In the last few posts, I have discussed global population, both current and future, as it relates to economic input and to climate change. I promised to ask a friend who specializes in demographics for his comments, and, fortunately for all of us, he said yes. What follows is his explanation of the factors that drive population growth and decline.

How Does Population Decline?

By Jim Foreit

The Climate Change Fork blog posts of December 24, 2013, and January 2, 2014, asked why projections of world population vary so widely: from today’s population of some 7 billion people to anywhere between 3.2 and 24.8 billion in 2150. Making predictions more than 50 years out is risky, so in this post I will discuss the determinants of population growth and limit the discussion to the year 2050, when world population is projected to reach roughly 8 to 10 billion.[i]

Barring massive migration to extra-terrestrial planets, global population growth will continue to be determined by the difference between births and deaths. If there are more births than deaths, the population will grow. If there are more deaths than births, the population will shrink. It’s as simple as that.
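The same bookkeeping can be written as the demographic balancing equation. In this sketch the notation is introduced only for illustration: P is world population at the start of a year, B and D are births and deaths during that year, and b and d are the corresponding crude birth and death rates. The migration term that appears in national versions of the equation is dropped because, for the world as a whole, net migration is zero.

```latex
P_{t+1} = P_t + B_t - D_t
\qquad\Longrightarrow\qquad
\frac{P_{t+1} - P_t}{P_t} = \frac{B_t}{P_t} - \frac{D_t}{P_t} = b_t - d_t
```

The growth rate is positive when the birth rate exceeds the death rate and negative when the death rate exceeds the birth rate.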

Let’s look at deaths first. Death rates have been declining for more than a century, even taking into account the overall aging of the population and the ongoing HIV epidemic. Purposefully containing population growth by increasing deaths would require us to resort to the apocalyptic factors of war, pestilence and starvation, strategies that few would advocate.

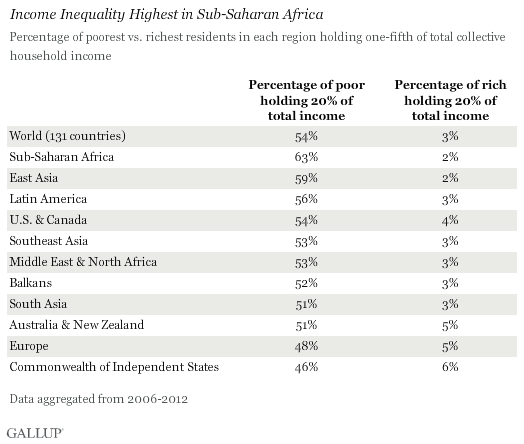

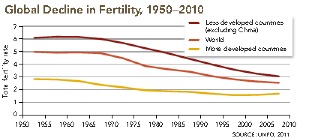

Therefore, world population growth can be slowed or reversed only by reducing births, or, as demographers call it, fertility. Since only women give birth, we can focus our calculations on women. Because some children will inevitably die before reaching adulthood, and because slightly more boys than girls are born, every woman must have an average of about 2.1 children in her lifetime to replace herself and her partner in a low-mortality population (average lifetime births per woman is called the total fertility rate, or TFR); where child mortality is high, the replacement level is higher. At replacement fertility, population size eventually stabilizes. This will not happen overnight: because today’s population is young, the number of girls under age 15 who will soon enter their childbearing years is larger than the number of women now aging out of them, so even if they all have 2.1 births, the population will continue to grow for another generation. If TFR drops below 2.1, the population will eventually shrink; this was the motivation behind China’s one-child policy. Currently, TFR ranges from about five children per woman in sub-Saharan Africa to fewer than two in North America, Europe, China, and Japan. Global TFR was approximately 4.9 in 1950 and approximately 2.6 in 2010.
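A short Python sketch of the two quantities in this paragraph follows. It computes TFR the standard way, as the sum of age-specific fertility rates (ASFRs) multiplied by the width of the age groups, and approximates the replacement level as (1 + sex ratio at birth) divided by the probability that a newborn girl survives to her childbearing years, a common textbook approximation. The ASFR values and survival probabilities are hypothetical, and the function names are illustrative.

```python
# Hypothetical births per woman per year in each five-year age group,
# 15-19 through 45-49. Not data for any real country.
HYPOTHETICAL_ASFR = [0.02, 0.10, 0.12, 0.09, 0.05, 0.015, 0.005]


def total_fertility_rate(asfr, group_width=5):
    """TFR = sum of age-specific rates times the width of each age group.

    This is the number of children a woman would have if she lived through
    her childbearing years at today's age-specific rates.
    """
    return group_width * sum(asfr)


def replacement_tfr(survival_to_motherhood, sex_ratio_at_birth=1.05):
    """Approximate replacement-level TFR.

    Each woman must leave, on average, one daughter who survives to
    childbearing age. With about 1.05 boys born per girl, that takes
    (1 + sex_ratio_at_birth) births per daughter, scaled up for daughters
    who die before reaching childbearing age.
    """
    return (1 + sex_ratio_at_birth) / survival_to_motherhood


if __name__ == "__main__":
    print(f"TFR: {total_fertility_rate(HYPOTHETICAL_ASFR):.2f}")
    # Assumed: 98% of girls survive to childbearing age (low mortality).
    print(f"replacement TFR, low mortality: {replacement_tfr(0.98):.2f}")
    # Assumed: 70% of girls survive to childbearing age (high mortality).
    print(f"replacement TFR, high mortality: {replacement_tfr(0.70):.2f}")
```

With these assumptions the replacement level comes out at about 2.09 in the low-mortality case and about 2.93 in the high-mortality case, which is why 2.1 is quoted for low-mortality populations.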

What drives fertility? At its root, fertility is biology: women may or may not get pregnant, and once pregnant they may or may not carry the pregnancy to term. Three principal factors, or behaviors, under a woman’s control determine whether she gets pregnant, and one main factor determines whether a pregnancy results in a live birth. These are called the proximate determinants of fertility. Socio-economic factors that influence fertility do so by acting on the proximate determinants and are called distal determinants of fertility.

The Proximate Determinants of Fertility [ii]

If she does not die in childbirth or from other causes, the average woman has about 35 years in which she can bear children: demographers conventionally count the reproductive span from age 15 to age 49, roughly from menarche (whose average age has been declining) to menopause. The earlier she has her first child, and the sooner she becomes pregnant again after each birth, the more children she will have in her lifetime. She can delay her first birth by delaying sexual activity (traditionally by postponing marriage) and/or by using contraception; she can delay subsequent pregnancies by breastfeeding her previous child (exclusive breastfeeding suppresses ovulation for about six months on average) and/or by using contraception. If an unwanted pregnancy occurs, she can terminate it with an abortion.
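The timing arithmetic in this paragraph can be sketched with a deliberately crude, hypothetical model (an illustration, not a demographic method): a woman has her first birth at a given age and then one birth every fixed interval until age 49. Because it ignores sterility, miscarriage, mortality and the decline of fecundity with age, the counts are upper bounds rather than realistic family sizes; what matters is how they change when the first birth is delayed or the interval is lengthened.

```python
def max_lifetime_births(age_at_first_birth, birth_interval_years, last_fertile_age=49):
    """Upper bound on births: first birth at age_at_first_birth, then one birth
    every birth_interval_years up to and including last_fertile_age.

    Hypothetical toy model: no sterility, miscarriage, death or age-related
    decline in fecundity.
    """
    if age_at_first_birth > last_fertile_age:
        return 0
    extra_births = (last_fertile_age - age_at_first_birth) // birth_interval_years
    return 1 + int(extra_births)


if __name__ == "__main__":
    # Early first birth with short intervals vs. a delayed first birth and
    # longer intervals (e.g. from breastfeeding or contraception).
    for first_birth_age in (18, 24):
        for interval in (2, 3):
            births = max_lifetime_births(first_birth_age, interval)
            print(f"first birth at {first_birth_age}, interval {interval} years: "
                  f"at most {births} births")
```

In this toy model, delaying the first birth from 18 to 24 lowers the ceiling from 16 to 13 births at a two-year interval, stretching the interval from two to three years lowers it from 16 to 11, and doing both lowers it to 9.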

At the population level, these are the four proximate determinants of fertility: percentage of women 15-49 married or sexually active, percentage of women using contraception (and the type of contraception used, as some methods are more effective at preventing pregnancy than others), average length of breastfeeding, and the abortion rate. The transition from high to low fertility has occurred by delaying the first birth and replacing “natural” constraints like breastfeeding (which has actually declined) with the more effective constraints of modern contraception and abortion.
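Demographers usually quantify these four determinants with the proximate-determinants model associated with John Bongaarts (see note [ii]). The sketch below uses the model’s commonly cited form, and the coefficients are worth checking against the original sources before relying on them. In this form, TFR = Cm × Cc × Ca × Ci × TF. TF ≈ 15.3 is total fecundity, the births a woman would have if none of the four determinants were at work. Each index lies between 0 and 1 and measures how much one determinant reduces fertility below that level:

- Cm is approximated by the proportion of women in union.
- Cc = 1 − 1.08·u·e, where u is contraceptive prevalence and e is average method effectiveness.
- Ci = 20/(18.5 + i), where i is postpartum infecundity in months, mostly from breastfeeding.
- Ca = TFR/(TFR + 0.4(1 + u)·TA), where TA is the total abortion rate.

The class and function names and all input values are hypothetical.

```python
from dataclasses import dataclass

# Total fecundity: births per woman if none of the four proximate
# determinants reduced fertility (commonly cited value in Bongaarts' model).
TOTAL_FECUNDITY = 15.3


@dataclass
class ProximateDeterminants:
    proportion_in_union: float            # approximates the index of marriage, Cm
    contraceptive_prevalence: float       # u: share of women in union using a method
    method_effectiveness: float           # e: average use-effectiveness, 0 to 1
    postpartum_infecundity_months: float  # i: mostly due to breastfeeding
    total_abortion_rate: float            # TA: induced abortions per woman


def predicted_tfr(d: ProximateDeterminants) -> float:
    """Predicted TFR = Cm * Cc * Ca * Ci * TF."""
    c_m = d.proportion_in_union
    # 1.08 adjusts for sterile women, who would not use contraception anyway.
    c_c = 1 - 1.08 * d.contraceptive_prevalence * d.method_effectiveness
    c_i = 20 / (18.5 + d.postpartum_infecundity_months)
    tfr_without_abortion = c_m * c_c * c_i * TOTAL_FECUNDITY
    # Ca = TFR / (TFR + 0.4 * (1 + u) * TA) contains TFR itself. Substituting it
    # into TFR = tfr_without_abortion * Ca and solving for TFR gives
    # TFR = tfr_without_abortion - 0.4 * (1 + u) * TA.
    births_averted_by_abortion = (
        0.4 * (1 + d.contraceptive_prevalence) * d.total_abortion_rate
    )
    return max(tfr_without_abortion - births_averted_by_abortion, 0.0)


if __name__ == "__main__":
    # Hypothetical high-fertility population: widespread union, little
    # contraception, long breastfeeding, no abortion.
    high = ProximateDeterminants(0.80, 0.10, 0.90, 12.0, 0.0)
    # Hypothetical low-fertility population: fewer women in union, widespread
    # effective contraception, short breastfeeding, some abortion.
    low = ProximateDeterminants(0.60, 0.70, 0.95, 3.0, 0.3)
    print(f"high-fertility TFR: {predicted_tfr(high):.1f}")
    print(f"low-fertility TFR:  {predicted_tfr(low):.1f}")
```

These invented inputs give a TFR of about 7.2 and 2.2 respectively. The second case reflects the shift described above: the lower fertility comes mainly from contraception (Cc), even though shorter breastfeeding (Ci) pushes fertility up.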

Distal Determinants of Fertility

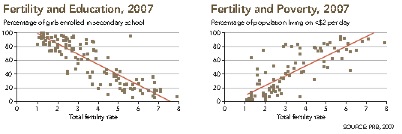

History clearly shows that urbanization, declining infant mortality, and increases in women’s education and employment are associated with sustained fertility decline. Why? These distal determinants can affect fertility only through their impact on one or more of the proximate determinants: marriage, breastfeeding, contraception and abortion. Often they interact synergistically, amplifying one another’s effects.

Let’s begin with female education. In many cultures, school attendance is seen as incompatible with marriage, so keeping girls in school raises the age at marriage and shortens the time available for childbearing. In high-fertility settings in sub-Saharan Africa, a one-year increase in age at marriage may be associated with a TFR decline of one birth or more.

However, the single most important factor in fertility change is change in demand for children – that is, how many children a woman or couple wants to have. In economic terms, as the cost of children increases, the demand for children decreases.[iii] Here’s why:

In traditional rural agricultural settings, children begin working at an early age: they contribute to family income and support their parents in old age. Value therefore flows from children to parents, and fertility is high. High infant mortality adds to parents’ desire for many children, to ensure that at least a few survive into adulthood; as infant mortality declines, fewer births are needed, so the desired number of children declines. This, however, does not immediately translate into lower fertility. In the short term, without increased use of contraception and/or abortion, fertility remains high while deaths fall, and population growth accelerates. Mortality almost always declines before fertility, and when no contraception is available, it is very difficult for couples to achieve their desired family size. In many developing countries, ideal family size is lower than actual fertility.
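The lag between falling mortality and falling fertility can be illustrated with a toy Python simulation. The crude birth and death rates (per 1,000 population) and their timing are invented for illustration, not taken from any country. Mortality starts falling in year 0, and fertility starts falling only in year 30. The function names are hypothetical.

```python
def linear_change(year, start_year, end_year, start_value, end_value):
    """Hold start_value until start_year, move linearly to end_value by
    end_year, then hold end_value."""
    if year <= start_year:
        return start_value
    if year >= end_year:
        return end_value
    fraction = (year - start_year) / (end_year - start_year)
    return start_value + fraction * (end_value - start_value)


def transition_path(years):
    """Return (year, birth rate, death rate, growth % per year) tuples.

    Hypothetical rates per 1,000 population: the death rate falls from 35 to
    10 over years 0-50; the birth rate falls from 40 to 12 over years 30-100.
    """
    path = []
    for year in years:
        death_rate = linear_change(year, 0, 50, 35.0, 10.0)
        birth_rate = linear_change(year, 30, 100, 40.0, 12.0)
        growth_percent = (birth_rate - death_rate) / 10  # per 1,000 -> percent
        path.append((year, birth_rate, death_rate, growth_percent))
    return path


if __name__ == "__main__":
    for year, cbr, cdr, growth in transition_path(range(0, 101, 10)):
        print(f"year {year:3d}: births {cbr:4.1f}, deaths {cdr:4.1f}, "
              f"growth {growth:.2f}% per year")
```

In this run, growth rises from 0.5% a year to a peak of 2.2% in year 50, when mortality has finished falling but fertility is only part-way down. It then falls back to 0.2% once fertility catches up.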

With migration to cities, children contribute less to family income. To succeed in an urban environment, families pay more for their children’s food, clothing, education and health care, and resources now flow from parents to children. Thus, urbanization leads to lower desired family size, and to the extent that methods and services are available, women can act on their fertility desires by preventing or delaying pregnancy through contraception or by terminating an unplanned pregnancy through abortion. Urbanization also increases women’s educational and employment opportunities, encouraging them to postpone marriage, as we saw above, and to use contraception so that they can work outside the home and contribute to family income. In fact, the more women’s income grows, the greater the cost of taking time off to raise children, which often translates into greater use of contraception and abortion and lower fertility. When I lived in Korea in 1978, a piano was seen as the ultimate family status symbol, and it was said that middle-class families in Seoul were forgoing a third child in order to buy a piano for the first two!

The table below shows how fertility varies with the distal determinants in a country at an early stage of development (Nigeria), a country at a middle stage (India), and a fully developed country (the United States). In the US, fertility differences across these factors are very small, so only national figures are shown: the total fertility rate (TFR), contraceptive prevalence (the percentage of women of reproductive age using contraception, or CPR), and infant mortality.

| Factor | Nigeria 2008: TFR | Nigeria 2008: CPR (%) | India 2005-06: TFR | India 2005-06: CPR (%) | USA 2010: TFR | USA 2010: CPR (%) |
|---|---|---|---|---|---|---|
| National level | 5.7 | 9.7 | 2.6 | 48.5 | 1.9 | 77 |
| Urban | 4.7 | 16.7 | 2.1 | 55.8 | – | – |
| Rural | 6.3 | 6.5 | 3.0 | 45 | – | – |
| Highest wealth quintile | 4.0 | 22.3 | 1.8 | 58 | – | – |
| Lowest wealth quintile | 7.1 | 2.5 | 3.9 | 34.6 | – | – |
| Women with no education | 7.3 | 2.6 | 3.6 | 45.7 | – | – |
| Women with secondary or higher education | 4.2 | 18.9 | 2.1 | 50.3 | – | – |

National infant mortality rate (deaths per 1,000 live births): Nigeria 91, India 57, USA 6.

Although TFR is much higher in Nigeria than in India, and CPR is much higher in India than in Nigeria, the same pattern of lower fertility and higher contraceptive use in urban areas and among better-educated and wealthier women is evident in both countries.
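To make the pattern explicit, a small Python sketch can compute the gaps between contrasting subgroups directly from the table’s Nigeria and India figures. The subgroup labels and the function name are illustrative; the numbers are the table’s own.

```python
# (TFR, CPR %) by subgroup, transcribed from the Nigeria 2008 and
# India 2005-06 columns of the table above.
SUBGROUPS = {
    "Nigeria": {
        "urban": (4.7, 16.7), "rural": (6.3, 6.5),
        "highest wealth quintile": (4.0, 22.3), "lowest wealth quintile": (7.1, 2.5),
        "secondary+ education": (4.2, 18.9), "no education": (7.3, 2.6),
    },
    "India": {
        "urban": (2.1, 55.8), "rural": (3.0, 45.0),
        "highest wealth quintile": (1.8, 58.0), "lowest wealth quintile": (3.9, 34.6),
        "secondary+ education": (2.1, 50.3), "no education": (3.6, 45.7),
    },
}

# Each pair is (more advantaged group, less advantaged group).
CONTRASTS = [
    ("urban", "rural"),
    ("highest wealth quintile", "lowest wealth quintile"),
    ("secondary+ education", "no education"),
]


def gaps(country):
    """For each contrast, return (label, TFR gap, CPR gap in percentage points).

    Positive gaps mean the advantaged group has lower fertility and higher
    contraceptive use than the disadvantaged group.
    """
    rows = []
    for advantaged, disadvantaged in CONTRASTS:
        tfr_adv, cpr_adv = SUBGROUPS[country][advantaged]
        tfr_dis, cpr_dis = SUBGROUPS[country][disadvantaged]
        rows.append((f"{advantaged} vs {disadvantaged}",
                     tfr_dis - tfr_adv, cpr_adv - cpr_dis))
    return rows


if __name__ == "__main__":
    for country in SUBGROUPS:
        for label, tfr_gap, cpr_gap in gaps(country):
            print(f"{country}, {label}: TFR lower by {tfr_gap:.1f}, "
                  f"CPR higher by {cpr_gap:.1f} points")
```

Every gap is positive in both countries. The sizes differ, however: the TFR gaps are larger in Nigeria, and the CPR gap by wealth is larger in India.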

Manipulating Fertility Decline: Can We Speed Up the Process?

The population-development debate is as old as Malthus (1798). Margaret Sanger organized the first World Population Conference in 1927, and the UN took up the baton decades later, holding conferences in 1954, 1965, 1974, 1984 and 1994.[iv] Debate at these conferences centered on the need for family planning. Some argued that development alone would drive fertility down (“development is the best contraceptive”) because populations would adopt fertility control without the need for organized programs. Others argued that development had to be accompanied by strong family planning policies and programs. While academics argued, countries and development donors took action: family planning programs were launched in India in the 1950s, in East and Southeast Asia (Korea, Taiwan, Thailand and Indonesia) between 1960 and 1970, in Latin America in the late 1970s and 1980s, and in sub-Saharan Africa in the 1990s.

Do family planning programs work? The answer is that it depends. Results are mixed, but by most accounts Bangladesh’s family planning program is the world’s standout success story, and it demonstrates that such programs can be highly effective despite considerable odds.

“During the early 1970s, American officials termed newly-independent Bangladesh a ‘basket case’… [and] demographers contributed to the dismal prognosis by using Bangladesh as an example of a society that was unlikely to initiate a transition from higher to lower fertility in the foreseeable future… Even optimists doubted that Bangladeshis would accept family planning on a large scale.”[v]

In an environment of little or no socio-economic development, but with concerted government support and enormous donor funding that brought information and contraceptive methods to women’s doorsteps, contraceptive use quintupled in 20 years, from less than 10% of married women in 1970 to over 49% in 1990, and fertility fell by roughly half, from a TFR of 6.3 to 3.3.[vi] This success came at a cost: over $800 million per year in today’s dollars, largely from international donors.

Coercion can also produce rapid fertility decline. China introduced its one-child policy in 1979, enforcing it with fines, forced abortion and sterilization. TFR fell from 3 in 1978 to 1.6 in 2012. Since few other countries have the desire (or the ability) to coerce their populations into low fertility, strong family planning programs seem the best option for speeding up fertility decline.

Manipulating Fertility Decline: How Low Can We Go?

Half of the world’s countries now have sub-replacement fertility.[vii] The downside of this trend is a shrinking labor force, which has led some governments to try to reverse course and raise fertility. Romania banned abortion in 1966, and fertility briefly increased, until illegal abortion providers emerged to meet demand. Other countries, such as France and Germany in the 1930s, offered families generous incentives ranging from free childcare to cash payments for additional children, but these measures did not produce substantially higher fertility. The relaxation of China’s one-child policy may result in higher fertility, but the effects will not be known for several years.

A world with sub-replacement fertility seems inevitable, with fewer productive adults supporting larger numbers of elderly people. What this will mean for human welfare will depend both on the future productivity of working adults and on the living standards expected for their parents.
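The usual summary of this shift is the old-age dependency ratio, conventionally the number of people aged 65 and over per 100 people aged 15 to 64. The Python sketch below computes that ratio. As a rough illustration of why productivity matters, it also computes the factor by which output per working-age adult would have to grow to keep output per person constant. The population figures are hypothetical, and treating only 15-to-64-year-olds as producers is a simplifying assumption.

```python
def old_age_dependency_ratio(aged_65_plus, aged_15_to_64):
    """People aged 65 and over per 100 people of working age (15-64)."""
    return 100 * aged_65_plus / aged_15_to_64


def productivity_rise_needed(before, after):
    """Factor by which output per working-age adult must grow to keep output
    per person unchanged.

    `before` and `after` are hypothetical (under 15, 15-64, 65+) population
    counts; the sketch assumes only the 15-64 group produces output.
    """
    def people_per_worker(pop):
        young, working, old = pop
        return (young + working + old) / working

    return people_per_worker(after) / people_per_worker(before)


if __name__ == "__main__":
    # Hypothetical populations (millions): same total, older age structure.
    before = (30, 60, 10)
    after = (30, 50, 20)
    print(f"old-age dependency ratio: "
          f"{old_age_dependency_ratio(before[2], before[1]):.0f} -> "
          f"{old_age_dependency_ratio(after[2], after[1]):.0f} per 100 working-age adults")
    print(f"output per worker must rise by a factor of "
          f"{productivity_rise_needed(before, after):.2f}")
```

In this example the ratio rises from about 17 to 40 older people per 100 working-age adults. Each worker must produce about 20% more just to hold average output per person constant.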

James Foreit, DrPH, has worked in family planning program research and evaluation for over thirty-five years and has lived in developing countries for eighteen years. He is the author of books, manuals and journal articles on family planning, HIV/AIDS, and maternal and child health. Dr. Foreit retired as a Senior Associate of the Population Council, New York, in 2012. He currently consults and teaches courses in operations research techniques and scientific writing.

[i] United Nations, Department of Economic and Social Affairs, Population Division. 2013. World Population Prospects: The 2012 Revision, Key Findings and Advance Tables.

[ii] Bongaarts, J. and Potter, R. G. 1983. Fertility, Biology, and Behavior: An Analysis of the Proximate Determinants. Academic Press. This is the basic work on the proximate determinants.

[iii] Becker, G. S. and Lewis, H. G. 1973. On the Interaction Between the Quantity and Quality of Children. Journal of Political Economy, 81, S279–S288.

[v] Larson, A. and Mitra, S. N. 1992. Family Planning in Bangladesh: An Unlikely Success Story. International Family Planning Perspectives, 18, 123–129.

[vi] In recent years, Bangladesh has benefited from large-scale female employment in the garment industry and continued family planning support. By 2011, CPR had risen to 61% and TFR was near replacement at 2.3.

[vii] Defined as TFR < 2.1; see https://www.cia.gov/library/publications/the-world-factbook/rankorder/2127rank.html
